Your programmatic campaign delivered 10 million impressions last month. Viewability hit 72%. Your dashboard looks great.

So why didn’t anyone remember seeing your ad?

Because we’ve built a $500 billion industry around measuring the wrong thing. Programmatic platforms are incredible at getting your ad in front of people. They’re terrible at ensuring anyone actually pays attention to it. We can target users based on thousands of data points, optimize bids in milliseconds, and serve personalized creative at scale. Yet the entire infrastructure is built on a flawed assumption: that getting an ad in front of someone means getting their attention.

Most marketers don’t realize this, but the whole system optimizes for viewability metrics and click-through rates while ignoring whether anyone actually processed the message. The ad tech industry has spent billions perfecting the delivery mechanism. The cognitive science of attention? That’s an afterthought. This creates a massive gap between what programmatic platforms measure and what actually drives business outcomes.

I’m going to show you why attention quality (not just attention metrics) should reshape your entire programmatic strategy, and why the current obsession with impressions and viewability is leaving money on the table.

Table of Contents

- The Attention Economy Myth That’s Draining Your Programmatic Spend
- Why Viewability Standards Miss the Point Entirely
- Cognitive Load Theory Applied to Programmatic Creative
- The Contextual Relevance Gap in Automated Buying
- Attention Decay Across Different Programmatic Channels
- Measuring What Matters Beyond Impressions
- Restructuring Your Programmatic Stack for Attention Quality

TL;DR

Quick version: Your programmatic ads are “viewable” but nobody’s actually looking at them. We’re measuring the wrong thing.

Programmatic platforms optimize for viewability and impressions, but these metrics don’t reliably predict actual message processing or brand recall. Current attention measurement focuses on duration and placement while ignoring cognitive load and contextual interference.

The automation that makes programmatic efficient also strips away the contextual understanding that determines whether attention translates to memory formation. Different programmatic channels create vastly different cognitive environments, yet most strategies treat attention as a universal metric.

Restructuring campaigns around attention quality rather than attention quantity requires new measurement frameworks and creative approaches. The gap between technical targeting capabilities and attention science? That’s the biggest untapped opportunity in programmatic advertising.

The Attention Economy Myth That’s Draining Your Programmatic Spend

We’ve been sold a story about the attention economy that sounds compelling but crumbles under scrutiny. The narrative goes like this: attention is scarce, programmatic technology helps you capture it efficiently, and viewability metrics prove you’re succeeding.

Except none of that addresses whether the attention you’re buying actually matters.

Programmatic platforms have gotten incredibly good at placing ads where eyes might land. They’ve mastered the technical challenge of serving the right ad to the right person at the right time. What they haven’t solved (and what most advertisers aren’t even asking about) is whether that moment of potential exposure creates any meaningful cognitive engagement.

Understanding what programmatic advertising is at a technical level is straightforward: it’s the automated buying and selling of digital ad inventory through technology platforms. The complexity lies not in the definition but in the assumption that technical precision equals marketing effectiveness.

The Technical Achievement We Celebrate Versus the Business Outcome We Need

Programmatic advertising is one of the most impressive technical achievements in marketing history. Real-time bidding happens in under 100 milliseconds. Audience segmentation can incorporate thousands of behavioral signals. Dynamic creative optimization can personalize messaging based on weather, location, device, and browsing history simultaneously.

All of this sophistication serves one primary goal: getting your ad in front of someone who might care.

And that’s exactly where we screw up. We’ve built an entire industry around optimizing the probability of exposure while treating the quality of that exposure as a secondary concern. The platforms report back with metrics that sound meaningful. Viewability rates, time in view, completion rates for video. These numbers create the illusion of attention measurement, but they’re really just measuring opportunity for attention.

A banner ad that meets the IAB viewability standard (50% of pixels in view for one second) tells you almost nothing about whether the viewer processed your message, formed any memory of your brand, or experienced any shift in perception.

Programmatic ad buying has become so focused on the mechanics of delivery that we’ve forgotten to question whether delivery equals impact. The technology works brilliantly at what it was designed to do. We’ve confused technical success with marketing success.

Take a financial services company running programmatic display campaigns targeting high-income professionals. Their dashboard showed 75% viewability rates and millions of impressions delivered. The metrics looked excellent. But when they conducted brand lift studies, they found almost no improvement in aided or unaided awareness.

The problem wasn’t the targeting or the creative quality. Their ads were appearing in high-stress news environments where users were scrolling rapidly through anxiety-inducing content. The ads were technically viewable, but the cognitive state of the audience made meaningful processing nearly impossible.

When they shifted budget toward contextually relevant financial content where users were already in a planning mindset, impression volume dropped by 40% but brand lift increased by 180%. The industry has spent billions perfecting the delivery mechanism, and cases like this one show how far that technical sophistication can sit from actual business outcomes.

What Cognitive Science Says About Attention That Programmatic Ignores

Attention isn’t binary. It’s not simply a matter of having someone’s attention or not having it.

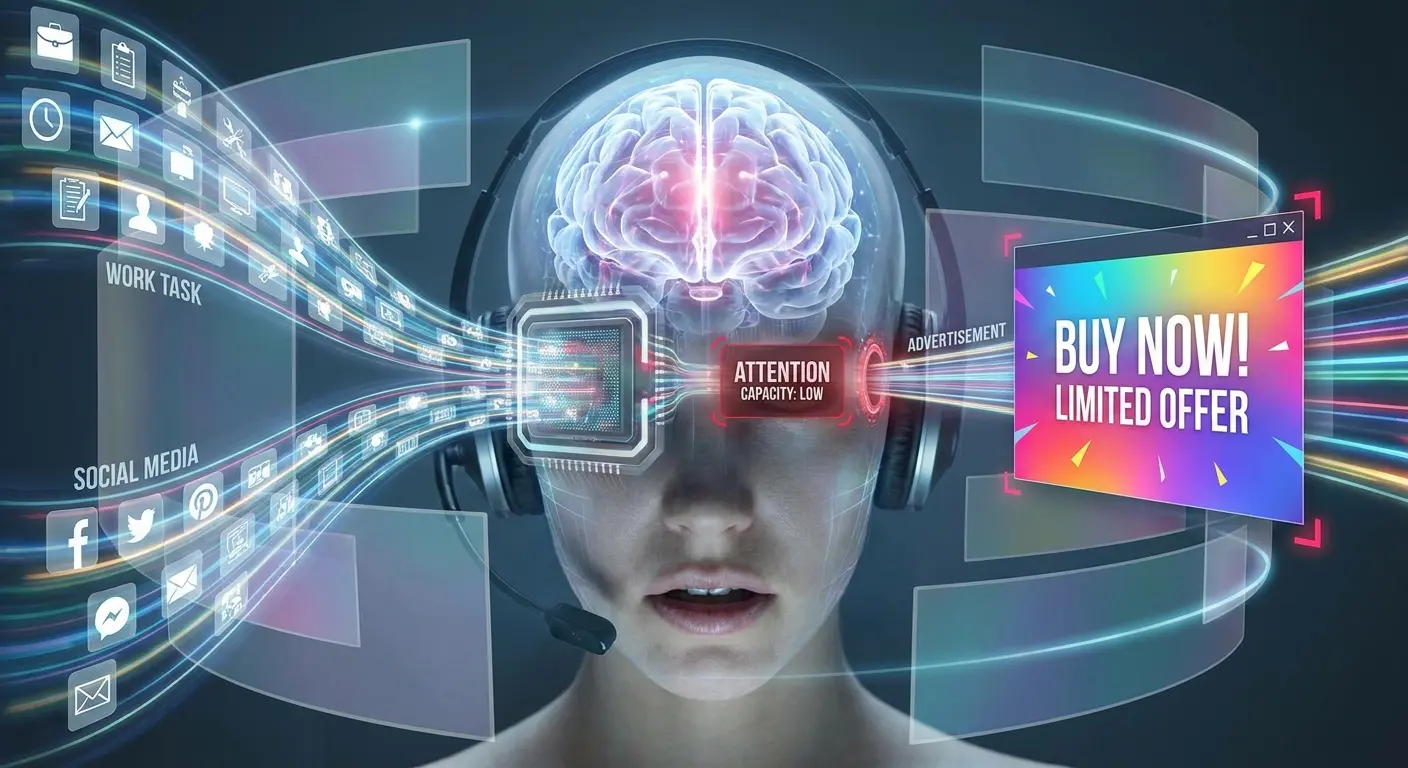

Cognitive psychologists distinguish between multiple types and levels of attention, each with different implications for memory formation and behavior change. Sustained attention (the ability to maintain focus over time) differs fundamentally from selective attention (choosing what to focus on amid distractions). Both differ from divided attention (attempting to process multiple streams of information simultaneously).

Programmatic platforms don’t distinguish between these states, even though they produce wildly different outcomes for advertisers.

Someone scrolling through a social feed experiences divided attention by default. They’re processing multiple competing stimuli while also managing their own browsing intentions. An ad that achieves viewability in this context faces cognitive competition that dramatically reduces the likelihood of meaningful processing. The viewer might register that an ad appeared without encoding any specific information about the brand or message.

Contrast this with someone reading an article who encounters a contextually relevant ad. The cognitive state is different. Sustained attention is already engaged, divided attention is reduced, and if the ad connects to the content context, selective attention might actually favor the ad rather than treating it as an interruption.

What is programmatic technology missing? The ability to distinguish between these scenarios in any meaningful way. Current systems optimize for the presence of the ad in the visual field, not for the cognitive state of the person viewing it.

The Measurement Problem That Perpetuates Bad Strategy

We optimize what we measure, and programmatic advertising has settled on metrics that are easy to track rather than metrics that predict outcomes.

This isn’t just a minor inefficiency. It’s systematically directing budget toward low-quality attention while starving high-quality attention opportunities.

Viewability became the standard because it was technically feasible to measure at scale. The IAB could create a definition, verification vendors could build technology to track it, and advertisers could include it in their contracts. The metric solved a legitimate problem (ads that never had any chance of being seen), but then came to be treated as a proxy for effectiveness rather than a minimum threshold for potential effectiveness.

Click-through rates face similar issues. They measure one specific type of engagement (active clicking) while ignoring the reality that most advertising works through passive exposure and memory formation rather than immediate response. Optimizing programmatic campaigns for CTR often means optimizing for the wrong outcome entirely, unless your goal is direct response and nothing else.

The platforms themselves have little incentive to push beyond these metrics. Viewability and CTR are defensible, standardized, and keep the focus on delivery rather than outcomes. Attention quality is messy, harder to define, and would require acknowledging that not all impressions carry equal value even when they meet current measurement standards.

Programmatic advertising has created a measurement ecosystem that serves everyone except the advertiser trying to drive actual business results.

Why Viewability Standards Miss the Point Entirely

Viewability metrics were created to solve a specific problem: ads that were technically served but never had any realistic chance of being seen. Publishers were selling inventory that loaded below the fold, in hidden iframes, or in placements that users never scrolled to. Advertisers were paying for impressions that were impressions in name only.

The IAB’s viewability standards addressed this by creating minimum thresholds. For display ads, 50% of pixels must be in view for at least one continuous second. For video, 50% of pixels for two continuous seconds. These standards gave advertisers a way to verify that their ads at least entered the viewport.
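To make those thresholds concrete, here is a minimal sketch of the display and video rules as stated above, assuming you already have time-stamped samples of what fraction of an ad’s pixels were inside the viewport. The `Sample` class and `meets_viewability` function are hypothetical illustrations, not any verification vendor’s method, and the sketch ignores real-world details such as gaps between samples.

```python
from dataclasses import dataclass

@dataclass
class Sample:
    t_ms: int             # time of the measurement, in milliseconds
    visible_frac: float   # share of the ad's pixels inside the viewport, 0.0 to 1.0

def meets_viewability(samples, min_visible=0.5, min_duration_ms=1000):
    """True if visible_frac stays >= min_visible for a continuous span of at
    least min_duration_ms. Display: 1000 ms; for video, pass 2000 ms.

    Assumes samples are sorted by time and that visibility holds between two
    consecutive samples whenever both of them meet the threshold.
    """
    run_start = None
    for s in samples:
        if s.visible_frac >= min_visible:
            if run_start is None:
                run_start = s.t_ms          # a qualifying run begins
            if s.t_ms - run_start >= min_duration_ms:
                return True
        else:
            run_start = None                # run broken, start over
    return False

# An ad that is simply scrolled past: above the 50% mark for about one second
scroll_past = [Sample(0, 0.0), Sample(250, 0.6), Sample(750, 0.8),
               Sample(1250, 0.55), Sample(1500, 0.1)]
print(meets_viewability(scroll_past))                        # True (display rule)
print(meets_viewability(scroll_past, min_duration_ms=2000))  # False (video rule)
```

Note what the example shows: an ad that drifts through the viewport during an ordinary scroll clears the display bar without anyone needing to look at it.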

What happened next is predictable if you understand how industries respond to measurement. Viewability stopped being a minimum threshold and became the goal.

Campaigns got optimized for viewability rates, publishers designed placements to maximize viewability, and the entire ecosystem reoriented around hitting these numbers.

The One-Second Window That Tells You Almost Nothing

One second is an arbitrary technical compromise, not a meaningful cognitive threshold. The IAB chose it because it was trackable and seemed reasonable, not because research showed that one second of potential exposure produces reliable memory formation or brand impact.

Human visual processing happens quickly. We can register the presence of an object in as little as 100 milliseconds. But registering presence differs entirely from processing meaning.

Reading and comprehending a headline takes longer. Evaluating whether an offer is relevant takes longer still. Forming any kind of emotional response or brand association requires sustained processing that one second rarely provides.

Programmatic campaigns optimized for viewability often serve ads in contexts where the one-second threshold gets met technically but nothing meaningful happens cognitively. The ad appears in the viewport as the user scrolls past. It’s viewable by the measurement standard, but the user’s attention was directed elsewhere and no processing occurred.

We’re paying for exposure that meets a technical definition while producing minimal cognitive impact. The viewability metric creates the appearance of accountability without actually measuring what matters.

How Optimizing for Viewability Actively Degrades Attention Quality

Chasing high viewability rates pushes programmatic budgets toward specific types of placements and contexts. Top-of-page positions, sticky ads that follow scroll, interstitials that block content. These formats achieve high viewability scores because they’re hard to miss, but they’re also formats that users have learned to tune out or actively resent.

Banner blindness isn’t just a catchy phrase. It’s a documented phenomenon where users develop cognitive strategies to avoid processing ads even when those ads are clearly visible.

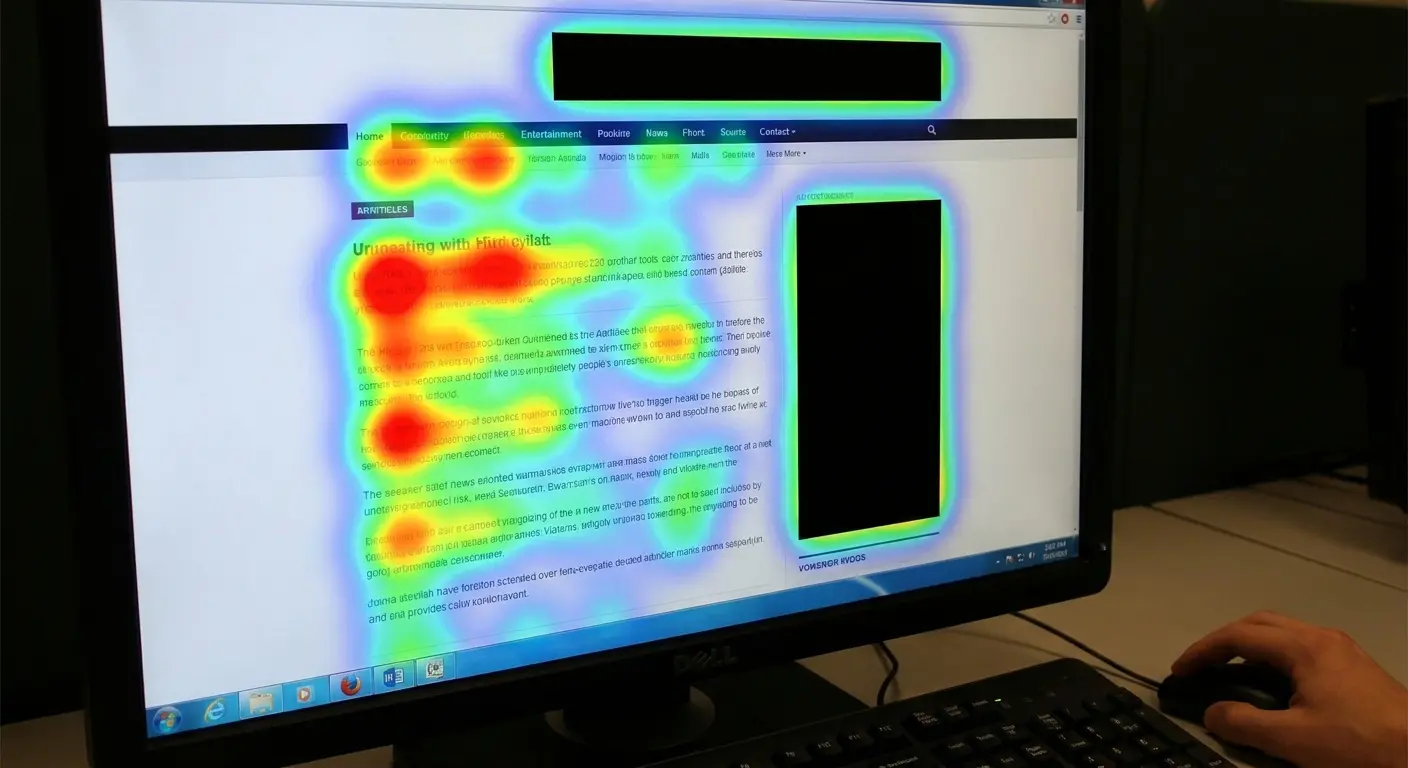

Eye-tracking research, paired with recall testing, suggests that users can look directly at an ad space without processing the ad content. The visual information reaches the eye but gets filtered out before it reaches conscious awareness.

Programmatic systems can’t detect banner blindness. They can verify that the ad was in the viewport and that pixels were visible, but they can’t measure whether the user’s visual attention actually engaged with the ad or whether their brain filtered it out as irrelevant noise.

Worse, the formats that maximize viewability often create negative attention. An interstitial that blocks content the user wants to access generates attention, but it’s attention coupled with frustration and brand negativity. The viewability metric treats this as success while the actual cognitive outcome works against your brand objectives.

|

Viewability Metric |

What It Actually Measures |

What It Doesn’t Measure |

|---|---|---|

|

50% of pixels visible for 1 second |

Ad entered the viewport during scroll |

Whether user’s eyes fixated on the ad |

|

Time in view |

Duration ad remained in viewport |

Whether cognitive processing occurred |

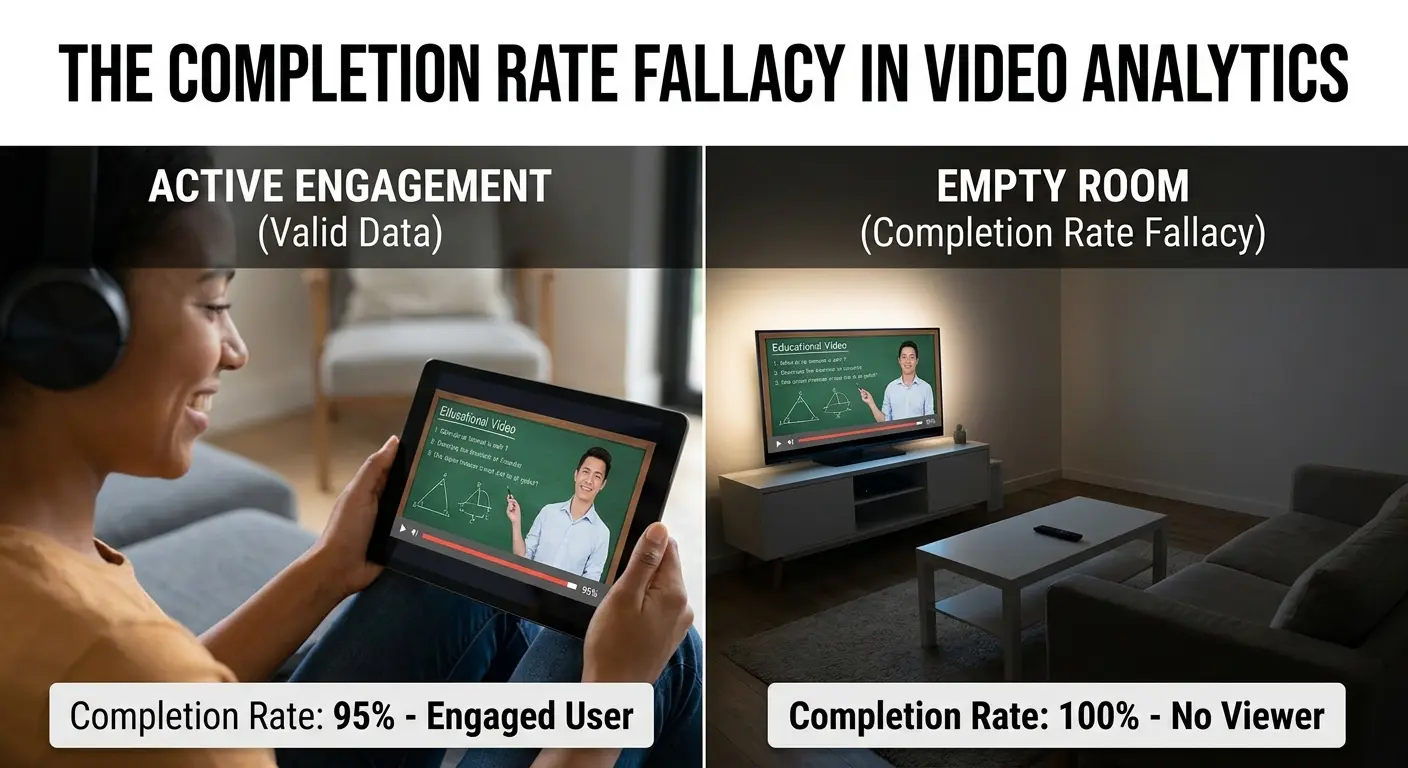

|

Completion rate (video) |

User didn’t skip or close |

Whether user was paying attention or left device |

|

Above-the-fold placement |

Ad loaded in initially visible area |

Whether user experienced banner blindness |

|

Sticky ad engagement |

Ad remained visible during scroll |

Whether visibility created positive or negative brand sentiment |

The False Equivalence Between Different Viewable Impressions

Programmatic reporting treats viewable impressions as fungible. One viewable impression equals another viewable impression, regardless of context, cognitive state, or competitive interference.

Convenient? Sure. Accurate? No.

A viewable impression in a contextually relevant article where the user is engaged and processing information differs fundamentally from a viewable impression in an auto-play video feed where the user is passively scrolling. Both meet the viewability standard, but the cognitive opportunity for your message differs dramatically.

The context surrounding the ad determines how much cognitive capacity is available for processing it. Users reading long-form content have typically committed to sustained attention. Their cognitive resources are engaged but directed, and a relevant ad can benefit from that attentional state.

Users scrolling through short-form content feeds are in a different mode entirely, processing rapidly and shallowly, filtering aggressively, and unlikely to shift into deeper processing for an ad.

Programmatic platforms can target these different contexts, but they don’t price them according to attention quality. A viewable impression is a viewable impression, and optimization algorithms push budget toward whatever achieves the target metrics most efficiently. This systematically undervalues high-quality attention contexts and overvalues low-quality ones.
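One way to see the pricing gap is to divide CPM by an estimate of how much attention each context delivers. The sketch below assumes a hypothetical `attention_weight` between 0 and 1 for each placement type; in practice that number would have to come from an attention-measurement study or your own brand lift data. The CPMs and weights are illustrative, not benchmarks.

```python
def attention_adjusted_cpm(cpm, attention_weight):
    """CPM scaled by a hypothetical 0-1 attention weight.

    A weight of 1.0 treats every viewable impression as fully attended;
    a weight of 0.1 assumes only a tenth of that value survives the context.
    """
    if not 0 < attention_weight <= 1:
        raise ValueError("attention_weight must be in (0, 1]")
    return cpm / attention_weight

# Illustrative placements: (CPM in dollars, assumed attention weight)
placements = {
    "short_form_feed": (4.00, 0.10),
    "long_form_article": (12.00, 0.60),
}

# Rank placements by cost per unit of (assumed) attention, cheapest first
ranked = sorted(placements, key=lambda name: attention_adjusted_cpm(*placements[name]))
for name in ranked:
    cpm, weight = placements[name]
    print(f"{name}: CPM ${cpm:.2f}, attention-adjusted ${attention_adjusted_cpm(cpm, weight):.2f}")
```

With these assumed numbers, the feed placement is three times cheaper per impression but twice as expensive per unit of attention ($4 / 0.1 = $40 versus $12 / 0.6 = $20). An optimizer that only sees viewable impressions sees just the first comparison.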

Cognitive Load Theory Applied to Programmatic Creative

Cognitive load theory explains how humans process and store information. We have limited working memory capacity, and when we exceed that capacity, learning and memory formation degrade rapidly.

This has direct implications for programmatic advertising that almost no one is addressing.

Most programmatic creative gets designed without any consideration of the cognitive load already present in the viewing environment. Advertisers focus on making their message clear and compelling in isolation, then deploy that creative across contexts with wildly different existing cognitive demands.

The Cognitive Environment Your Ads Actually Appear In

Users encountering your programmatic ads aren’t sitting in a neutral cognitive state waiting to process your message. They’re already engaged in some task or content consumption that’s consuming cognitive resources.

Your ad is an additional cognitive demand competing for limited processing capacity.

Social media feeds mess with your brain. That’s not an accident. They’re built to keep you scrolling through multiple pieces of content rapidly, making constant relevance judgments, and managing your own social-emotional responses to what you see. Adding an ad to this environment means adding to an already taxed cognitive system.

Content sites vary enormously in the cognitive load they create. A complex news article about economic policy creates high cognitive load as readers work to understand the information. A listicle with simple entertainment content creates much lower cognitive load.

The same ad creative will perform differently in these contexts because the available cognitive capacity differs.

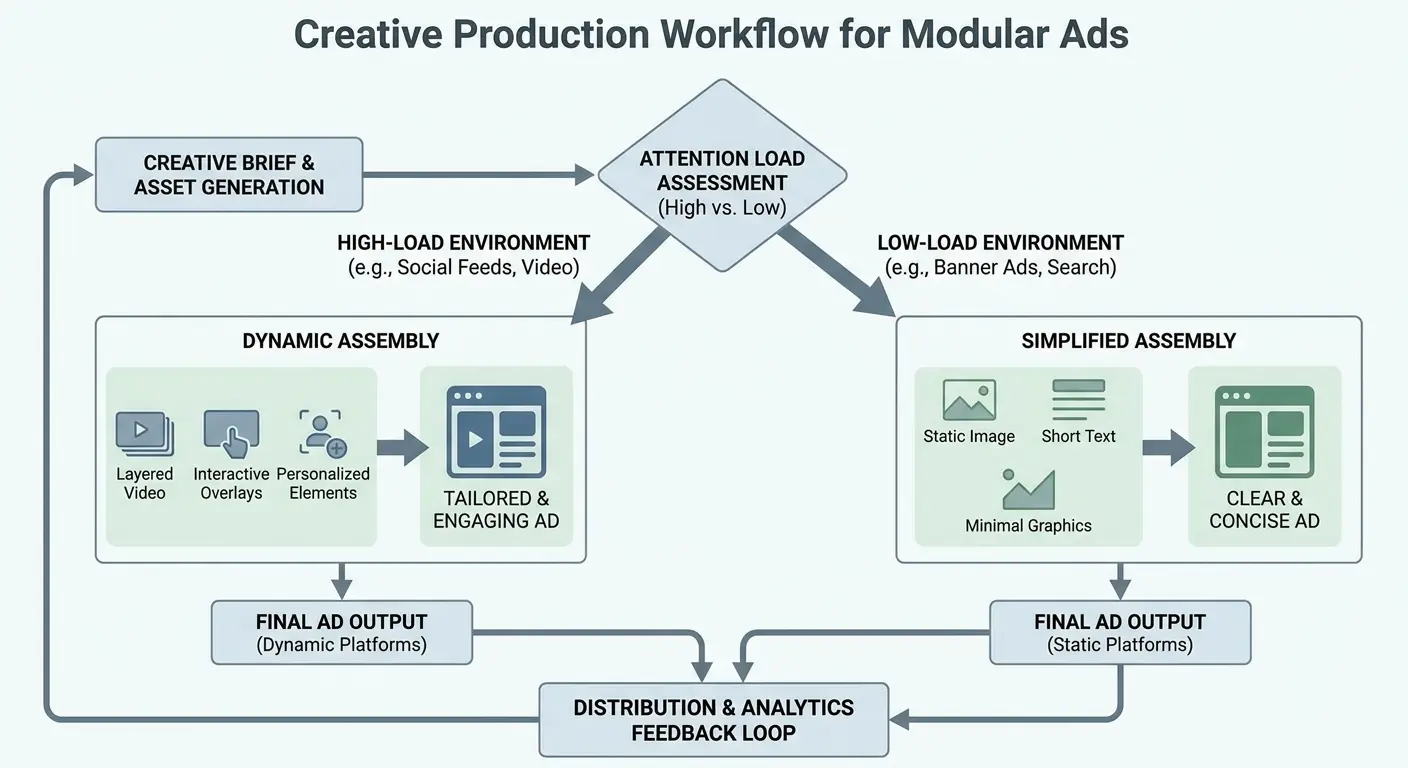

Programmatic systems can target these different environments, but the creative typically remains static. We’re not adjusting message complexity based on the cognitive load of the placement context. We’re serving the same ad whether the user has abundant cognitive capacity or is already at their processing limit.

A luxury automotive brand was running the same 15-second video ad across all programmatic placements. The creative featured beautiful cinematography, subtle brand messaging, and required viewers to connect multiple visual metaphors to understand the value proposition.

Performance varied wildly by placement. The ad performed exceptionally well on premium automotive content sites where users were already in a research mindset with cognitive capacity to process complex messaging. The same creative failed completely in social feed placements where users were scrolling rapidly through mixed content.

The brand wasn’t getting worse reach in social feeds; it was getting the wrong kind of reach. It developed a simplified version for high-cognitive-load environments that stated the value proposition directly in the first three seconds, with immediate brand identification. Social feed performance improved by 220% while premium content performance remained strong with the original creative.

Why Complexity in Creative Backfires in High-Load Environments

Marketers often equate creative sophistication with effectiveness. Clever concepts, multiple message layers, subtle brand storytelling. These approaches can work beautifully when the viewer has cognitive capacity to process them.

In high-load programmatic environments, they fail predictably.

Complex creative requires sustained attention and available working memory to decode. The viewer needs to notice the ad, shift attention to it, process the visual and textual elements, integrate them into a coherent message, and connect that message to existing brand knowledge. Each step consumes cognitive resources.

When the viewing environment already has high cognitive load, users don’t have the resources to complete this process. They might register that an ad appeared, but they won’t process the clever concept or remember the nuanced message. The sophistication you invested in becomes wasted effort because the cognitive conditions for processing it don’t exist.

Simple, direct creative often outperforms clever creative in programmatic contexts. It’s not that users prefer simple messages. It’s that simple messages require less cognitive effort to process, making them more likely to succeed in high-load environments.

Matching Creative Complexity to Available Cognitive Capacity

Smart programmatic strategy would vary creative complexity based on the cognitive load of the placement context. High-load environments (social feeds, busy content sites, mobile contexts) should receive simple, direct creative that can be processed with minimal cognitive effort.

Low-load environments (engaged content consumption, desktop contexts with sustained attention) can support more complex messaging.

Current programmatic platforms don’t make this easy. Dynamic creative optimization typically varies elements like product images or headlines based on user characteristics, not based on the cognitive environment of the placement. The technology to do this exists, but the strategic thinking around it remains underdeveloped.

We’re leaving performance on the table by treating creative as independent from cognitive context. An ad that works brilliantly in one cognitive environment can fail completely in another, not because the creative is bad but because it’s mismatched to the available processing capacity.
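Here is a minimal sketch of that idea as a lookup from placement context to creative variant, assuming you can label each placement with a context type. The context names, the two-level load scale, and the variant names are all hypothetical; in a real stack, a rule like this would sit in front of whatever creative-selection mechanism your DSP or DCO tool provides.

```python
from enum import Enum

class Load(Enum):
    HIGH = "high"
    LOW = "low"

# Hypothetical labels based on the environments named above; real
# assignments should come from your own testing.
CONTEXT_LOAD = {
    "social_feed": Load.HIGH,
    "busy_content_site": Load.HIGH,
    "mobile_web": Load.HIGH,
    "long_form_article": Load.LOW,
    "desktop_research": Load.LOW,
}

# Hypothetical creative variants for each load level
CREATIVE_BY_LOAD = {
    Load.HIGH: "simple_direct",    # value proposition and brand up front
    Load.LOW: "full_narrative",    # the original, more layered creative
}

def pick_creative(context, default_load=Load.HIGH):
    """Choose a creative variant for a placement context.

    Unknown contexts fall back to HIGH load, on the reasoning that simple
    creative is the safer bet when you don't know the environment.
    """
    load = CONTEXT_LOAD.get(context, default_load)
    return CREATIVE_BY_LOAD[load]

for ctx in ["social_feed", "long_form_article", "unlabeled_app"]:
    print(ctx, "->", pick_creative(ctx))
```

The fallback is the main design choice: when the cognitive environment is unknown, defaulting to the simple variant risks underselling a calm context rather than overloading a busy one.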

Creative Complexity Assessment Checklist

I use this framework with every client. It catches 90% of creative-context mismatches before they waste budget:

- Message Processing Time: Can the core message be understood in under 2 seconds? (Required for high-load environments)
- Visual Complexity: Does the creative require interpreting multiple visual elements to understand the offer? (Simplify for mobile and social)
- Brand Identification: Is your brand visible and identifiable in the first frame? (Critical when attention duration is uncertain)
- Cognitive Steps Required: How many mental steps must a viewer take to connect your creative to a relevant need? (Limit to 1-2 steps in high-load contexts)
- Audio Dependency: Does the message require sound to be understood? (Assume sound-off for most programmatic video)
- Text Volume: Can all text be read in the guaranteed viewable time? (Reduce text for 1-second viewability thresholds)
- Call-to-Action Clarity: Is the desired action immediately obvious without interpretation? (Essential across all environments)

Don’t obsess over checking every box. Use this as a gut-check, not a straitjacket.
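If you want the checklist to run as a pre-flight check on creative specs, it can be encoded as a handful of rules. This is a sketch under stated assumptions: the `CreativeSpec` fields are hypothetical estimates someone on your team fills in during review, and the thresholds are the ones stated in the checklist (under 2 seconds, at most 2 cognitive steps, a 1-second viewable window). It returns flags rather than a score, in keeping with the gut-check spirit.

```python
from dataclasses import dataclass

@dataclass
class CreativeSpec:
    # All fields are hypothetical estimates filled in during creative review
    core_message_seconds: float       # time to grasp the core message
    visual_elements_to_decode: int    # elements a viewer must interpret to get the offer
    brand_in_first_frame: bool
    cognitive_steps: int              # mental steps from creative to a relevant need
    needs_audio: bool
    text_read_seconds: float          # time to read all on-ad text
    cta_obvious: bool

def checklist_flags(spec, high_load, viewable_seconds=1.0):
    """Return the checklist items this creative fails for a given environment."""
    flags = []
    if high_load and spec.core_message_seconds >= 2:
        flags.append("core message needs 2+ seconds")
    if high_load and spec.visual_elements_to_decode > 1:
        flags.append("multiple visual elements to interpret")
    if not spec.brand_in_first_frame:
        flags.append("brand not identifiable in first frame")
    if high_load and spec.cognitive_steps > 2:
        flags.append("more than 2 cognitive steps")
    if spec.needs_audio:
        flags.append("message depends on sound")
    if spec.text_read_seconds > viewable_seconds:
        flags.append("text can't be read in the viewable window")
    if not spec.cta_obvious:
        flags.append("call to action needs interpretation")
    return flags

layered = CreativeSpec(core_message_seconds=4.0, visual_elements_to_decode=3,
                       brand_in_first_frame=False, cognitive_steps=3,
                       needs_audio=True, text_read_seconds=3.0, cta_obvious=True)
print(checklist_flags(layered, high_load=True))   # six flags
print(checklist_flags(layered, high_load=False))  # three flags
```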

The Contextual Relevance Gap in Automated Buying

Programmatic advertising automated the buying process, which brought massive efficiency gains but also stripped away human judgment about contextual fit. Algorithms optimize for audience targeting and performance metrics without understanding whether the ad makes sense in the specific content context where it appears.

We’ve gotten very good at finding the right person and very bad at considering whether the right moment actually exists within that person’s current experience.

When Precise Audience Targeting Creates Contextual Disasters

Programmatic platforms can target users with incredible precision. Behavioral history, demographic data, purchase intent signals, location, device, time of day. You can define your audience with hundreds of parameters and reach them wherever they appear online.

This precision creates a false sense of control. You’re reaching the right person, so the placement must be effective.

Except the right person in the wrong context produces terrible outcomes that your metrics might not even capture.

Someone researching vacation destinations is a valuable audience for travel advertisers. Programmatic systems can identify these users and serve them travel ads across the web. But what if the ad appears while they’re reading about a plane crash? The audience targeting is perfect, the contextual fit is disastrous, and the brand association formed in that moment works against you.

These contextual disasters happen constantly in programmatic advertising because the automation prioritizes audience over context. The algorithm found the right person and served the ad, mission accomplished. The fact that the contextual environment made the ad ineffective or actively harmful doesn’t register in the optimization process.

The Semantic Understanding That Algorithms Still Lack

Natural language processing has improved dramatically, and programmatic platforms now offer contextual targeting based on page content. But semantic understanding remains shallow compared to human comprehension.

Algorithms can identify that an article is about cars, but they struggle with nuance, tone, and implication.

An article celebrating new electric vehicle technology creates a positive context for automotive advertising. An article about automotive recalls creates a negative context. An article about the environmental impact of car manufacturing creates a complex context where some automotive ads would work and others would backfire.

Current contextual targeting often can’t distinguish between these scenarios with sufficient nuance.

The result is ads appearing in contexts where a human buyer would immediately recognize the poor fit, but the algorithm sees only that the content matches the targeting parameters. We’ve traded human judgment for scale and efficiency, but we’ve also lost the contextual intelligence that determines whether attention translates to positive brand outcomes.

Building Context-Aware Optimization Into Programmatic Strategy

Solving this requires moving beyond simple keyword-based contextual targeting toward more sophisticated understanding of content sentiment, user intent, and cognitive state. Some platforms are developing these capabilities, but adoption remains limited because it adds complexity to campaign setup and reporting.

We need to start thinking about programmatic placements not just as opportunities to reach a target audience but as specific moments with their own characteristics.

What is the user trying to accomplish right now? What emotional state does the content create? How does our ad message fit into or disrupt that experience?

This level of contextual awareness can’t be fully automated yet, which means it requires more strategic oversight than most programmatic campaigns receive. We’ve gotten comfortable with set-it-and-forget-it optimization, but attention quality demands more active management of where and how ads appear.

A healthcare technology company was targeting IT decision-makers at hospitals with programmatic display ads for their patient data management system. Their audience targeting was precise, reaching the exact job titles and company types they needed.

But their ads kept appearing on news sites during coverage of healthcare data breaches and patient privacy violations. The contextual association was toxic. Every impression reinforced anxiety about data security rather than positioning their solution as trustworthy.

When they implemented negative contextual targeting to exclude content about breaches, hacks, and violations, their cost per qualified lead dropped by 35% despite reaching a smaller audience. The quality of contextual attention mattered more than the volume of audience-targeted impressions.
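Mechanically, negative contextual targeting is an exclusion rule applied to page content when deciding whether to bid. The sketch below uses plain whole-word keyword matching with a hypothetical term list modeled on the example above. It is exactly the shallow, keyword-based approach described earlier in this section, so treat it as an illustration of the mechanism rather than a substitute for the more sentiment-aware contextual tools some platforms are developing.

```python
import re

# Hypothetical exclusion list modeled on the healthcare example above
EXCLUDE_TERMS = [
    "breach", "breaches",
    "hack", "hacks", "hacked",
    "privacy violation", "privacy violations",
    "ransomware",
]

def build_exclusion_pattern(terms):
    # Case-insensitive, whole-word matching so "hack" doesn't match "Shackleton"
    alternation = "|".join(re.escape(t) for t in terms)
    return re.compile(r"\b(?:" + alternation + r")\b", re.IGNORECASE)

EXCLUSION_PATTERN = build_exclusion_pattern(EXCLUDE_TERMS)

def should_exclude(page_text):
    """True if the page mentions any excluded term, so the bid should be skipped."""
    return EXCLUSION_PATTERN.search(page_text) is not None

print(should_exclude("Regional hospital network hit by ransomware attack"))    # True
print(should_exclude("How hospitals are modernizing patient data management"))  # False
```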

Attention Decay Across Different Programmatic Channels

Programmatic advertising spans multiple channels, each with distinct characteristics that affect how attention works. Display, video, native, audio, digital out-of-home. We tend to lump these together in reporting and strategy, but the attention dynamics differ so dramatically that treating them as equivalent is strategic malpractice.

Each channel creates a different cognitive relationship between the user and the content. These relationships determine how much attention is available, how long it can be sustained, and what type of processing is likely to occur.

Display Advertising’s Fundamental Attention Problem

Display ads face the most hostile attention environment of any programmatic channel. Users have spent two decades learning to ignore them.

Banner blindness is so pervasive that eye-tracking studies show users navigating a webpage while their gaze largely skips over display ad positions.

The format itself works against attention. Display ads are static (or minimally animated), peripheral to the content users actually want, and almost never contextually integrated into the page experience. They’re easy to ignore, and users have obliged by developing sophisticated cognitive filtering.

Programmatic display campaigns can still work, but only when we acknowledge these attention constraints. Frequency matters more than reach because breaking through banner blindness requires repeated exposure. Creative needs to be instantly comprehensible because processing time is measured in fractions of a second.

Brand building through display requires accepting that each impression does minimal work and success comes from accumulation over time.

Most display strategies don’t account for these realities. We set frequency caps to avoid annoying users, which makes sense from a user experience perspective but undermines the repeated exposure needed to overcome attention filtering. We create creative that requires sustained viewing to understand, then wonder why it doesn’t perform.
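Frequency caps are typically enforced as a count of impressions per user within a time window. The sketch below shows a sliding-window version; the class name and the numbers in the example are hypothetical, and DSPs implement capping on their own side with their own settings. What it makes concrete is that the cap is a single tunable number: raising `max_impressions` for display is how you’d trade some user-experience risk for the repetition that breaking through banner blindness requires.

```python
from collections import defaultdict, deque

class FrequencyCap:
    """Sliding-window cap: at most max_impressions per user per window_seconds."""

    def __init__(self, max_impressions, window_seconds):
        self.max_impressions = max_impressions
        self.window_seconds = window_seconds
        self._served = defaultdict(deque)  # user_id -> timestamps of served impressions

    def try_serve(self, user_id, now):
        """Record and allow an impression if the user is under the cap; else refuse."""
        times = self._served[user_id]
        # Forget impressions that have aged out of the window
        while times and now - times[0] >= self.window_seconds:
            times.popleft()
        if len(times) >= self.max_impressions:
            return False
        times.append(now)
        return True

DAY = 24 * 60 * 60
cap = FrequencyCap(max_impressions=3, window_seconds=DAY)
served = [cap.try_serve("user-1", t) for t in (0, 600, 1200, 1800)]
print(served)  # [True, True, True, False]
```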

Video’s Attention Advantage and How We’re Squandering It

Video advertising should have a massive attention advantage. Movement captures attention automatically through pre-attentive processing. Audio adds a second sensory channel. The format supports narrative and emotional engagement in ways display never can.

Programmatic video has largely squandered these advantages by prioritizing completion rates over attention quality.

Autoplay with sound off has become standard, which eliminates the audio channel entirely. Skippable formats create an adversarial dynamic where the first five seconds are spent trying to prevent the skip rather than communicating anything meaningful. Outstream video that plays in-article often appears with minimal context, creating confusion rather than engagement.

We’re treating video as just another impression type rather than recognizing its unique attention properties. Video can create sustained attention if the creative earns it, but most programmatic video creative is designed to survive the format constraints (autoplay, sound-off, skippable) rather than to leverage video’s strengths.

The completion rate metric exemplifies the problem. A user who watches your entire 30-second ad because they walked away from their device creates a perfect completion rate but zero attention. A user who watches five seconds with full attention then skips creates a terrible completion rate but potentially more brand impact.

We’re optimizing for the wrong outcome because we’re measuring the wrong thing.
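The two viewers described above are easy to put side by side. This sketch assumes a hypothetical `seconds_attended` field, which would have to come from some form of attention measurement because completion reporting doesn’t capture it. The point is only that the two metrics can rank the same views in opposite order.

```python
from dataclasses import dataclass

@dataclass
class VideoView:
    seconds_played: float
    seconds_attended: float  # hypothetical: seconds a person was actually watching

def completion_rate(views, ad_length_seconds):
    """Share of views that played to the end of the ad."""
    completed = sum(1 for v in views if v.seconds_played >= ad_length_seconds)
    return completed / len(views)

def mean_attended_seconds(views):
    """Average seconds of actual attention per view."""
    return sum(v.seconds_attended for v in views) / len(views)

AD_LENGTH = 30
walked_away = [VideoView(seconds_played=30, seconds_attended=0)]
watched_then_skipped = [VideoView(seconds_played=5, seconds_attended=5)]

for label, views in [("walked away", walked_away), ("watched then skipped", watched_then_skipped)]:
    print(label, completion_rate(views, AD_LENGTH), mean_attended_seconds(views))
# walked away 1.0 0.0
# watched then skipped 0.0 5.0
```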

Native Advertising’s Context Integration Opportunity

Native advertising works by mimicking the form and function of the surrounding content. Done well, it reduces the cognitive disruption that other ad formats create.

Users process native ads using the same attention mode they’re already in rather than having to shift modes to deal with an obvious advertisement.

Programmatic native has grown rapidly, but the quality varies wildly. Some native placements genuinely integrate into the content experience with relevant, valuable information that happens to be sponsored. Others are native in format only, using content recommendation widgets to serve clickbait that destroys trust the moment users click through.

The attention advantage of native depends entirely on maintaining the cognitive contract with the user. They’re processing content, your ad appears as content, and if it delivers on that expectation, attention flows naturally. Break that contract with misleading headlines or irrelevant content, and you’ve burned attention capital that’s hard to rebuild.

Programmatic native campaigns need different success metrics than other formats. Engagement time on the landing page matters more than click-through rate. Scroll depth and content completion indicate whether attention was sustained beyond the initial click. Bounce rate reveals whether the ad created false expectations that the content didn’t deliver on.
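Those three signals can be computed from landing-page session data. The sketch assumes hypothetical session records with dwell time, maximum scroll depth, and pages viewed, and uses assumed definitions: a bounce is a single-page session shorter than 10 seconds, and content completion means scrolling through at least 90% of the page. Your analytics tool will have its own definitions, so adjust the thresholds to match.

```python
from dataclasses import dataclass
from statistics import median

@dataclass
class LandingSession:
    dwell_seconds: float     # time spent on the landing page
    max_scroll_depth: float  # deepest point reached, 0.0 to 1.0 of the page
    pages_viewed: int

def native_metrics(sessions, bounce_seconds=10.0, completion_depth=0.9):
    """Post-click metrics for a native campaign, using assumed definitions:
    bounce = one page viewed and dwell under bounce_seconds;
    content completion = scrolled to at least completion_depth.
    """
    n = len(sessions)
    bounces = sum(1 for s in sessions
                  if s.pages_viewed == 1 and s.dwell_seconds < bounce_seconds)
    completions = sum(1 for s in sessions if s.max_scroll_depth >= completion_depth)
    return {
        "median_dwell_seconds": median(s.dwell_seconds for s in sessions),
        "content_completion_rate": completions / n,
        "bounce_rate": bounces / n,
    }

sessions = [
    LandingSession(5, 0.10, 1),    # clicked, felt misled, left
    LandingSession(60, 0.95, 1),   # read the piece, then left
    LandingSession(120, 1.00, 3),  # read it and kept browsing
    LandingSession(8, 0.20, 1),
]
print(native_metrics(sessions))
# {'median_dwell_seconds': 34.0, 'content_completion_rate': 0.5, 'bounce_rate': 0.5}
```

The second session shows why dwell time and scroll depth belong alongside bounce rate: a single-page visit can still be a reader who consumed the whole piece.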

| Programmatic Channel | Attention Advantage | Attention Challenge | Optimal Creative Approach |
|---|---|---|---|
| Display | Low cost, broad reach | Severe banner blindness, minimal processing time | Ultra-simple visuals, bold brand presence, frequency over reach |
| Video | Movement captures attention, multi-sensory | Sound-off default, skip behavior, completion doesn't equal attention | Front-load message, visual storytelling without audio dependency |
| Native | Contextual integration, reduced cognitive disruption | Trust erosion from clickbait, high bounce risk | Deliver genuine value, match content quality and tone |
| Audio | Exclusive attention during certain activities | Easy to tune out, no visual support | Repetition for recall, memorable audio branding, simple messaging |
| DOOH | Forced exposure, large format impact | Brief attention windows, environmental distractions | Instant comprehension, minimal text, striking visuals |

Audio and DOOH as Emerging Attention Opportunities

Programmatic audio and digital out-of-home are newer channels with attention characteristics we’re still figuring out. Both offer something increasingly rare: reduced competition for attention in specific moments.

Audio advertising reaches users during activities where visual attention is unavailable or occupied. Driving, exercising, household tasks. The audio channel has exclusive access to attention in these moments, which makes it incredibly valuable.

But audio also faces unique challenges. Users can tune out audio more easily than visual stimuli. Creative needs to work without visual support. Brand recall from audio-only exposure is typically lower than recall from multi-sensory exposure.

Programmatic audio is growing fast, but creative strategy hasn’t caught up. We’re often repurposing visual campaign concepts into audio without considering how attention works differently in audio-only contexts. The result is ads that technically run but don’t leverage the unique attention opportunity the channel provides.

Digital out-of-home offers forced exposure in physical environments. Users can’t scroll past a billboard or close a screen in a subway station. But attention in these contexts is fragmented and brief.

People are in transit, focused on navigation, and processing their physical environment. DOOH ads need to work in seconds, not minutes, and need to communicate despite divided attention.

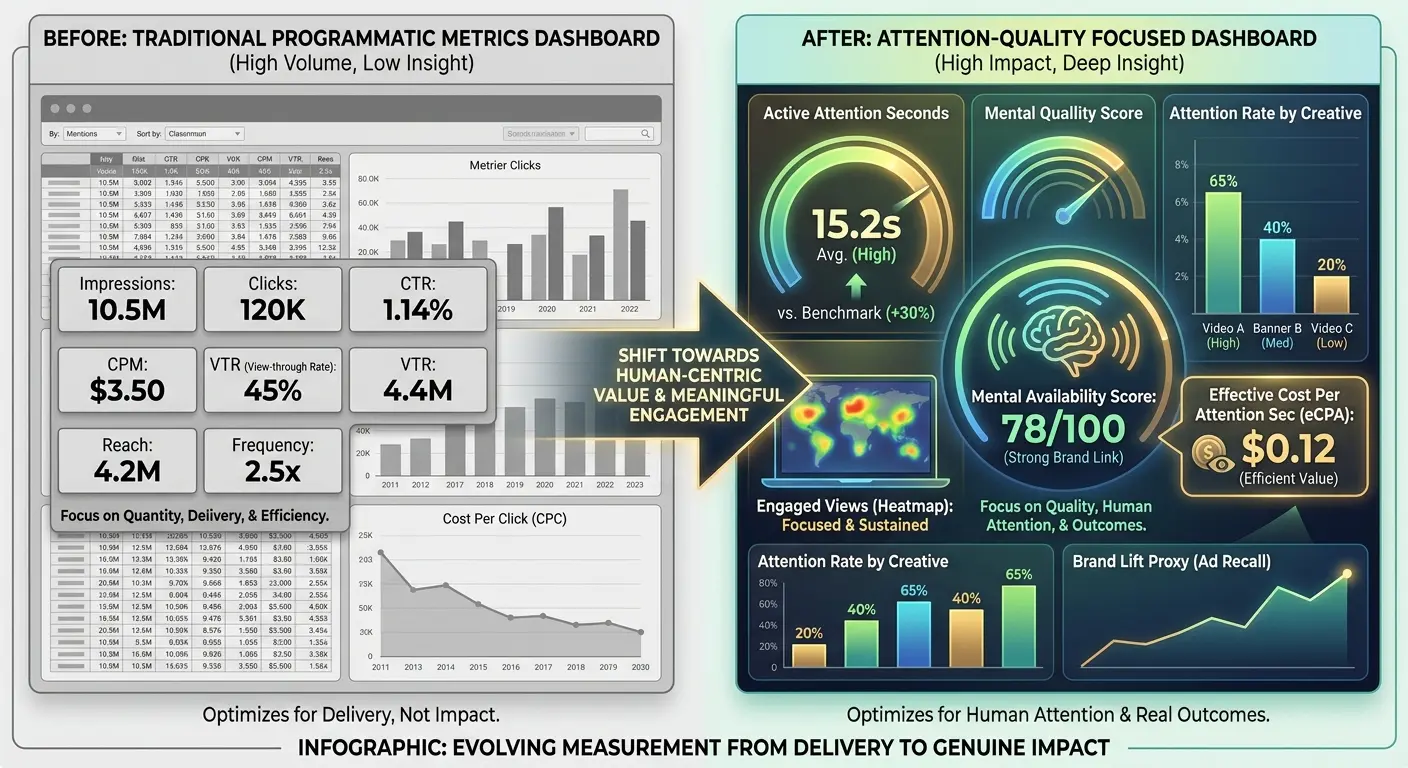

Measuring What Matters Beyond Impressions

The measurement frameworks we’ve inherited from traditional advertising and early digital don’t capture attention quality. Impressions, reach, frequency, viewability. These metrics describe delivery and potential exposure, but they don’t tell us what happened cognitively when the ad appeared.

Building better measurement requires defining what we actually mean by attention in programmatic contexts and then finding ways to track it that are both meaningful and scalable.

Attention Metrics That Actually Predict Outcomes

Several companies are developing attention measurement solutions that go beyond viewability. Eye-tracking studies translated into predictive models. Active attention metrics based on cursor movement and scroll behavior. Time-in-view measurements that distinguish between passive and active viewing.

These approaches are progress, but they also reveal how far we have to go.

Eye-tracking provides the richest data but doesn’t scale. Predictive models based on eye-tracking studies make assumptions that may not hold across contexts. Behavioral signals like cursor movement can indicate attention but can also be misleading (cursor position doesn’t always correlate with visual attention).

The most promising direction involves combining multiple signals to create probabilistic attention scores. Time-in-view plus scroll behavior plus page engagement plus contextual factors. No single signal tells the full story, but patterns across signals can indicate whether attention likely occurred and what quality that attention had.
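Here is a minimal sketch of what combining signals into a probabilistic score can look like, assuming a logistic model. The signal names, the clutter index, and every weight in `WEIGHTS` are hypothetical placeholders. A real model would fit them against labeled data such as eye-tracking panels or brand lift results rather than hand-picking them.

```python
import math
from dataclasses import dataclass


@dataclass
class ImpressionSignals:
    seconds_in_view: float
    scroll_paused: bool     # did scrolling stop while the ad was on screen?
    page_engaged: bool      # any interaction with the page during exposure
    context_clutter: float  # hypothetical index: 0 = clean page, 1 = very cluttered


# Placeholder weights for illustration only; a real model would fit these
# (e.g. with logistic regression) against labeled attention data.
WEIGHTS = {
    "bias": -2.0,
    "log_seconds": 1.2,
    "scroll_paused": 0.8,
    "page_engaged": 0.5,
    "context_clutter": -1.0,
}


def attention_probability(s: ImpressionSignals, w: dict = WEIGHTS) -> float:
    """Combine several weak signals into one probability that attention occurred."""
    z = (
        w["bias"]
        + w["log_seconds"] * math.log1p(s.seconds_in_view)  # diminishing returns on time
        + w["scroll_paused"] * float(s.scroll_paused)
        + w["page_engaged"] * float(s.page_engaged)
        + w["context_clutter"] * s.context_clutter
    )
    return 1.0 / (1.0 + math.exp(-z))  # logistic link maps the sum into 0-1


focused = ImpressionSignals(8.0, True, True, 0.1)
skimmed = ImpressionSignals(1.0, False, False, 0.9)
print(round(attention_probability(focused), 2), round(attention_probability(skimmed), 2))
```

Taking the log of time-in-view encodes diminishing returns: going from one to three seconds in view should matter more than going from twenty to twenty-two.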

We’re not going to get perfect attention measurement at scale. The goal should be directionally accurate measurement that’s meaningfully better than viewability at predicting outcomes. That’s achievable with current technology, but it requires advertisers to demand it and be willing to act on it even when it means lower reported impression volumes.

Connecting Attention Metrics to Business Outcomes

Attention measurement only matters if it connects to business results. We need to establish whether higher-quality attention (however we define and measure it) produces better outcomes for advertisers.

Establishing that requires testing and attribution that most programmatic campaigns don’t currently do.

Brand lift studies can measure whether campaigns with higher attention scores produce greater awareness, consideration, or purchase intent. Sales attribution can track whether attention quality correlates with conversion rates. Multi-touch attribution models can weight touchpoints based on attention quality rather than treating all exposures equally.

The data is starting to show what we’d expect: attention quality predicts outcomes better than impression volume. Campaigns optimized for attention metrics rather than viewability often show better brand lift with fewer impressions. Higher attention placements drive more efficient conversion paths even when they cost more per impression.

But this data remains siloed and inconsistent. We need industry-wide standards and shared research to make attention measurement actionable for most advertisers. Right now, attention quality optimization requires custom measurement and testing that’s beyond the resources of many marketing teams.

Attention Quality Measurement Framework

I built this framework after auditing 30 campaigns, and the pattern across them was obvious:

Pre-Campaign Setup

- Define what attention success looks like for your specific campaign objective (awareness vs. consideration vs. conversion)
- Establish baseline metrics: current viewability rates, time-in-view averages, engagement rates by channel
- Identify which attention signals are trackable within your current tech stack
- Set up brand lift study methodology if budget allows

Active Campaign Monitoring

- Track time-in-view distribution (not just averages; look at the full distribution curve)
- Monitor scroll behavior: the percentage of users who pause scrolling when the ad appears
- Measure interaction signals: hover events, volume-on actions for video, cursor movement patterns
- Analyze completion patterns: where users drop off in video content
- Compare attention signals across different contextual environments
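The point about distributions versus averages is easy to demonstrate. This Python sketch (function names are hypothetical) reports percentiles alongside the mean, plus the share of very short exposures and a simple scroll-pause rate. It assumes you can export per-impression time-in-view and a pause flag from your measurement vendor.

```python
from statistics import mean, quantiles


def time_in_view_profile(seconds: list[float]) -> dict:
    """Describe the whole time-in-view distribution rather than a single average."""
    if len(seconds) < 2:
        raise ValueError("need at least two impressions")
    deciles = quantiles(seconds, n=10)  # 9 cut points: 10th through 90th percentile
    return {
        "mean": mean(seconds),
        "p10": deciles[0],
        "p50": deciles[4],
        "p90": deciles[8],
        "share_under_1s": sum(s < 1.0 for s in seconds) / len(seconds),
    }


def scroll_pause_rate(paused_flags: list[bool]) -> float:
    """Share of in-view impressions where the user stopped scrolling while the ad was visible."""
    return sum(paused_flags) / len(paused_flags) if paused_flags else 0.0


print(time_in_view_profile([0.2] * 8 + [10.0, 30.0]))
print(scroll_pause_rate([True, False, True, True]))
```

With a skewed sample of eight 0.2-second impressions and two long ones (10 and 30 seconds), the mean is 4.16 seconds while the median is 0.2 seconds, exactly the gap an average-only report hides.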

Post-Campaign Analysis

- Correlate attention metrics with conversion data to identify quality thresholds
- Segment performance by context type, not just audience segment
- Calculate attention-adjusted CPM (what did you actually pay for high-quality attention?)
- Document which creative variations performed best in which attention environments
- Build learnings into the next campaign’s targeting and creative strategy
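Attention-adjusted CPM can be computed as spend divided by attentive impressions, times 1,000. The sketch below assumes you have already decided what counts as an "attentive" impression (for example, an attention score above a chosen threshold). The placement names and figures are invented for illustration, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class PlacementResult:
    name: str
    spend: float                # total media cost for the placement
    impressions: int
    attentive_impressions: int  # impressions that met your chosen attention threshold

    @property
    def cpm(self) -> float:
        return self.spend / self.impressions * 1000

    @property
    def attention_adjusted_cpm(self) -> float:
        """Cost per thousand impressions that actually met the attention threshold."""
        if self.attentive_impressions == 0:
            return float("inf")
        return self.spend / self.attentive_impressions * 1000


# Invented figures for illustration only.
placements = [
    PlacementResult("open exchange", 2000.0, 1_000_000, 50_000),
    PlacementResult("curated deal", 3600.0, 400_000, 160_000),
]
for p in placements:
    print(f"{p.name}: CPM {p.cpm:.2f}, attention-adjusted CPM {p.attention_adjusted_cpm:.2f}")
```

In this made-up comparison the curated deal costs 4.5 times as much per thousand impressions (9.00 vs. 2.00) but less per thousand attentive impressions (22.50 vs. 40.00).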

Building Attribution Models Around Attention Quality

Current attribution models treat impressions as binary. The user either saw the ad or didn’t. Multi-touch attribution weights touchpoints based on position in the conversion path, but it doesn’t account for the quality of exposure at each touchpoint.

Attention-based attribution would weight touchpoints based on the likelihood that meaningful cognitive processing occurred.

A viewable impression in a high-attention context would receive more credit than a viewable impression in a low-attention context. An impression with strong attention signals would count more than an impression that barely met viewability thresholds.

Technical challenges exist. Attribution already struggles with data fragmentation and identity resolution. Adding attention quality as a variable compounds the complexity. But the alternative is continuing to credit impressions that did nothing while undervaluing impressions that drove real cognitive impact.

The technology exists to build attention-weighted attribution models. What’s missing is adoption and standardization. Advertisers need to demand it, platforms need to support it, and the industry needs to agree on frameworks that make results comparable across campaigns and vendors.
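One way to sketch attention-weighted attribution: start from a position-based baseline (here the 40/20/40 U-shaped split, chosen as an assumption), scale each touchpoint's share by its estimated attention probability, then renormalize so credit still sums to one. The path format and channel labels are hypothetical.

```python
from collections import defaultdict


def position_weights(n: int) -> list[float]:
    """U-shaped (40/20/40) position-based weights, used here as the baseline model."""
    if n == 1:
        return [1.0]
    if n == 2:
        return [0.5, 0.5]
    middle = 0.2 / (n - 2)
    return [0.4] + [middle] * (n - 2) + [0.4]


def attention_weighted_credit(path: list[tuple[str, float]]) -> dict[str, float]:
    """Split one conversion's credit across a path of (channel, attention_probability) pairs.

    Each touchpoint's position-based weight is scaled by its attention estimate,
    then everything is renormalized so the credit still sums to 1.
    """
    if not path:
        return {}
    base = position_weights(len(path))
    raw = [b * p for b, (_, p) in zip(base, path)]
    total = sum(raw)
    if total == 0:
        raw, total = base, sum(base)  # no attention signal at all: fall back to the baseline
    credit = defaultdict(float)
    for (channel, _), r in zip(path, raw):
        credit[channel] += r / total
    return dict(credit)


print(attention_weighted_credit([("display", 0.1), ("native", 0.7), ("video", 0.6)]))
```

For a path of display (attention 0.1), native (0.7), and video (0.6), the U-shaped baseline alone gives display 40% of the credit. After attention weighting it drops to roughly 10%, while native rises from 20% to about 33%.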

Restructuring Your Programmatic Stack for Attention Quality

Shifting from impression-based to attention-based programmatic strategy requires changes throughout your technology stack and operational processes. You can’t just flip a switch.

You need to rethink how you plan, execute, measure, and optimize campaigns.

Choosing Platforms and Partners That Support Attention Measurement

Not all programmatic platforms and DSPs offer the same capabilities for attention measurement and optimization. Some have integrated attention metrics from third-party providers. Others are developing proprietary attention scoring. Many still operate purely on traditional metrics.

Evaluating platforms for attention capabilities means asking specific questions.

What attention signals do they track beyond viewability? How do they define and measure attention quality? Can you optimize campaigns toward attention metrics rather than just viewability or CTR? What reporting do they provide on attention performance?

The answers vary, and cutting-edge attention measurement may not be available across all the inventory sources you need. This creates a transition challenge. You might need to run hybrid strategies where some campaigns optimize for attention quality while others still rely on traditional metrics because the platform capabilities aren’t there yet.

Supply path optimization takes on new importance in an attention-focused strategy. The shortest path to inventory isn’t necessarily the path that delivers the best attention quality. Some SSPs and exchanges prioritize inventory quality in ways that correlate with attention. Others optimize purely for scale and efficiency.

Restructuring Creative Production for Context Variability

Attention-based programmatic requires more creative variations than most brands currently produce. You need different creative for different cognitive contexts, different attention environments, and different levels of existing cognitive load.

This doesn’t mean producing hundreds of unique ads. It means creating modular creative systems where elements can be recombined based on placement context. Simple, direct versions for high-load environments. More complex, narrative versions for low-load environments. Variations that work with and without sound. Formats optimized for different attention durations.

Dynamic creative optimization tools can help, but they need to be directed by strategic thinking about attention. The technology can swap elements and test variations, but it can’t independently understand cognitive load or attention quality. That requires human strategy informing the automation.
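The human strategy that directs the automation can start as a set of explicit rules that fill interchangeable creative slots based on placement context. Everything here is hypothetical: the context fields, the asset labels, the 6/15/30-second cut lengths, and the 10-second cutoff for front-loading the brand are placeholders for whatever your DCO tool and asset library actually support.

```python
from dataclasses import dataclass

# Hypothetical modular asset library: each slot has interchangeable variants.
HEADLINES = {"high": "single-benefit headline", "low": "narrative hook headline"}
BODY_COPY = {"high": None, "low": "two-sentence story"}
CUT_LENGTHS = (6, 15, 30)  # available video edits, in seconds


@dataclass
class PlacementContext:
    cognitive_load: str      # "high" or "low", from a hypothetical contextual classifier
    sound_on_likely: bool
    expected_seconds: float  # predicted attention window for this placement


def assemble_creative(ctx: PlacementContext) -> dict:
    """Fill each creative slot from a rule keyed to the placement's attention context."""
    fitting_cuts = [c for c in CUT_LENGTHS if c <= ctx.expected_seconds]
    return {
        "headline": HEADLINES[ctx.cognitive_load],
        "body": BODY_COPY[ctx.cognitive_load],  # high-load placements drop body copy
        "captions": not ctx.sound_on_likely,    # sound-off placements need on-screen text
        # Longest cut that fits the window; if none fits, use the shortest available.
        "cut_seconds": max(fitting_cuts) if fitting_cuts else min(CUT_LENGTHS),
        # Short windows get the brand in the first second instead of an end card.
        "brand_placement": "first_second" if ctx.expected_seconds < 10 else "end_card",
    }


print(assemble_creative(PlacementContext("high", False, 4.0)))
print(assemble_creative(PlacementContext("low", True, 20.0)))
```

The rules stay readable and auditable, while the DCO platform handles the mechanics of swapping and testing the assets they select.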

Production processes need to change too. Creative teams need briefs that include attention context, not just audience demographics and campaign messages. They need to understand where and how the creative will appear, what cognitive state users will be in, and what attention constraints exist.

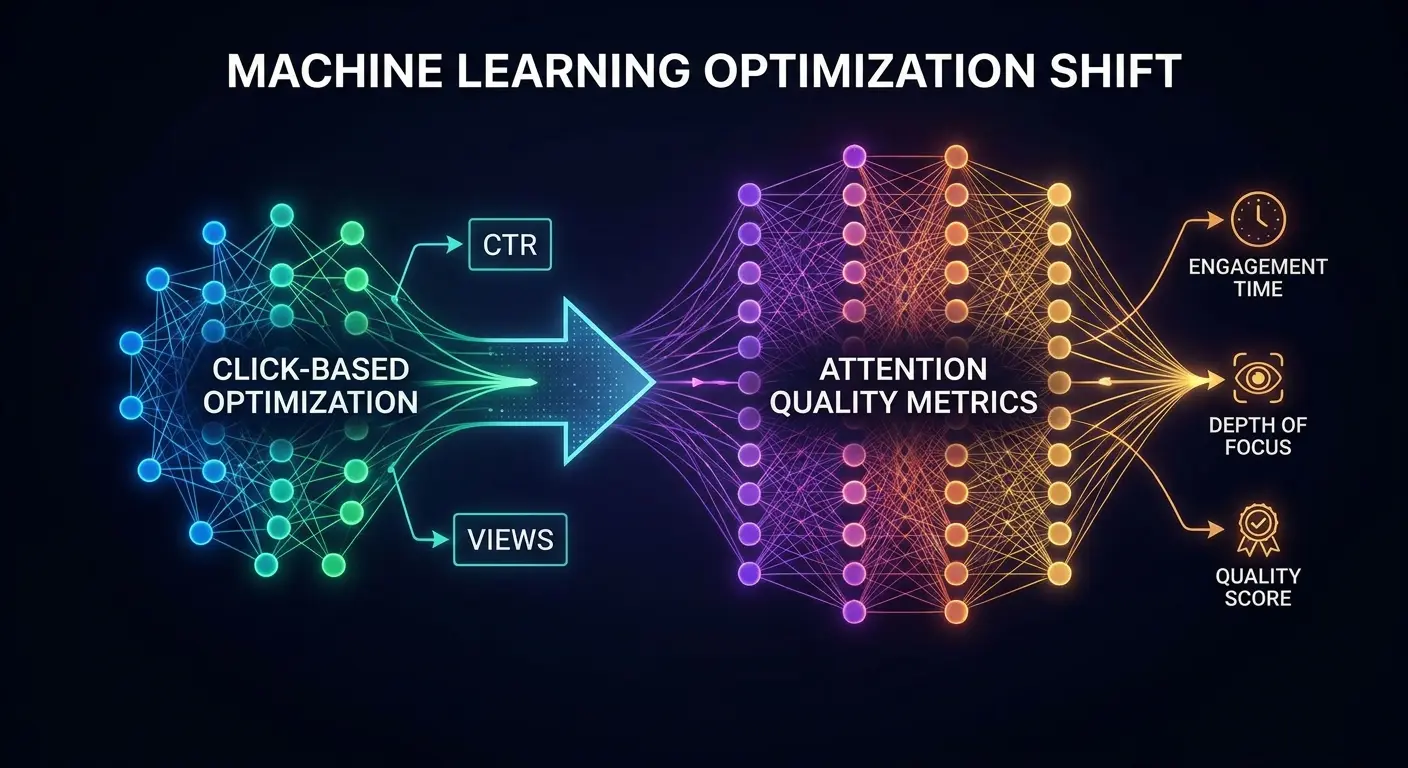

Training Algorithms to Optimize for Attention Instead of Clicks

Machine learning algorithms optimize toward whatever goal you set. Most programmatic campaigns optimize toward clicks, conversions, or viewability because those are the metrics we’ve historically tracked.

Shifting to attention-based optimization means retraining algorithms with new goals.

You need attention metrics that are trackable at scale and available in real-time. If your attention measurement comes from post-campaign analysis, you can’t use it for optimization. You need attention signals that can inform bidding decisions in the moment.

Some platforms are starting to offer this. Attention prediction models that score inventory in real-time. Bidding strategies that target high-attention placements even when they cost more per impression. Optimization algorithms that balance attention quality against volume and efficiency.
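If a platform exposes a real-time attention prediction per impression, a simple attention-based bid rule follows from the attention-adjusted CPM idea: bidding `target_acpm * p_attention` means that, in expectation, each thousand attentive impressions costs about your target. The sketch assumes a first-price auction where you pay your bid, ignores bid shading and pacing, and uses hypothetical parameter names and an arbitrary 0.2 attention floor.

```python
def attention_bid_cpm(p_attention: float, target_acpm: float, max_cpm: float, min_p: float = 0.2) -> float:
    """Return a CPM bid for one impression, or 0.0 to skip it.

    Paying bid_cpm when attention occurs with probability p gives an expected cost
    per thousand attentive impressions of bid_cpm / p, so bidding target_acpm * p
    keeps that expected cost at or below the target (first-price auction assumed).
    """
    if not 0.0 <= p_attention <= 1.0:
        raise ValueError("p_attention must be between 0 and 1")
    if p_attention < min_p:
        return 0.0  # below the hypothetical attention floor: skip the impression
    return min(target_acpm * p_attention, max_cpm)  # cap protects against over-bidding


for p in (0.1, 0.5, 0.9):
    print(p, attention_bid_cpm(p, target_acpm=20.0, max_cpm=15.0))
```

The rule reproduces the transition described next: low-attention impressions are skipped and high-attention ones get higher bids, so impression volume falls while the expected cost of attention holds steady.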

The transition period is awkward. Algorithms optimized for attention will often show worse performance on traditional metrics. Impression volumes might drop. Viewability rates might improve less than they would under viewability-focused optimization. Click-through rates could decline.

But if attention quality is actually higher, business outcomes should improve even as vanity metrics suffer.

Changing Reporting and Success Metrics Across Stakeholders

The hardest part of shifting to attention-based programmatic isn’t technical. It’s organizational.

Stakeholders are used to certain metrics. Reports follow established templates. Success gets defined by numbers everyone understands, even if those numbers don’t predict business results.

Introducing attention metrics means educating stakeholders on why they matter and how to interpret them. It means changing reports to emphasize new metrics while de-emphasizing old ones. It means resetting expectations about what good performance looks like.

Build the business case with data. Show the correlation between attention metrics and business outcomes. Demonstrate that campaigns optimized for attention quality delivered better brand lift or more efficient conversion paths. Make the abstract concept of attention concrete through results.

You’ll need to maintain some traditional metrics during the transition because stakeholders need continuity. But gradually shift the emphasis. Lead with attention metrics in reporting. Explain optimization decisions in terms of attention quality. Celebrate wins that show attention-focused strategies outperforming volume-focused ones.

Final Thoughts

Programmatic advertising has solved the technical challenge of ad delivery at scale. We can find audiences, serve personalized creative, and measure exposure with incredible precision.

What we haven’t solved is ensuring that the exposure we’re paying for actually creates meaningful cognitive engagement.

The attention quality gap is the biggest opportunity in programmatic advertising right now. Brands that figure out how to measure, optimize, and buy attention rather than just impressions will see dramatically better returns from their programmatic spend. Those that continue optimizing for viewability and volume will keep wondering why their metrics look good but business results disappoint.

This shift won’t happen overnight. Attention measurement is still evolving. Platform capabilities vary. Organizational inertia is real.

But the direction is clear. Impressions and viewability were necessary first steps, but they’re not sufficient measures of programmatic effectiveness. Attention quality is what actually drives outcomes, and it’s time our strategies and measurement caught up to that reality.

Is this easy to implement? Hell no. Most DSPs don’t even support attention-based bidding yet. You’ll need custom measurement, patient stakeholders, and probably a bigger budget for testing. But the alternative is continuing to waste money on “viewable” impressions that nobody sees.

Here’s what actually changes: You stop optimizing for viewability. You start paying 3x more per impression for contextually relevant placements. Your impression volume drops by 60%. Your boss panics. And your brand lift doubles.

That’s the trade. The brands winning in programmatic aren’t buying more impressions. They’re buying better attention.

Make the shift before your competitors do.